
I’ve got a bug I’m trying to fix. We’ve all got bugs we’re trying to fix. I’ve got a bunch of bug-fixing tools at my disposal that I’ve written about in my thoughts on debugging and elsewhere on this blog. One of my favorite tools is asking questions.

A recent experience made me think: is it possible to ask a question that leads you directly to a solution? If so, how do you find those questions? It’s a meta-question.

At the Ranch we use Slack for a lot of our internal communication. Some channels are work-related and dedicated to a project, and others are for fun, devoted to hobbies such as yoga or Pokemon. We also have a channel for iOS/macOS/tvOS/watchOS programming.

A common occurrence is “I’m having trouble with this API. Any ideas?” Sometimes it leads to a pairing session if it’s something weird or nasty. Sometimes there’s a history lesson if someone knows why an API is behaving oddly. Usually there’s some back and forth to get more details about the problem.

One day last summer, one of the nerds was having a problem with images on iOS:

Joseph: why would UIGraphicsGetImageFromCurrentImageContext() return nil if I have just created a new bitmap graphics context via UIGraphicsBeginImageContext(size)? I’m playing with Xcode 8b2

Joseph: okay, wrong question. why would UIGraphicsBeginImageContext(size) fail? UIGraphicsGetCurrentContext() is returning nil

Joseph: nvm, I’m changing professions.

MarkD: heh. how big is the size?

MarkD: (and if it’s iOS 10 only, there’s a new UIGraphicsRenderer pile of classes)

Joseph: shakes his head. How do you do that?

MarkD: do what?

Joseph: size is 0,0. how did you know the right question to ask?

MarkD: it’s the only articulation point in the call, so verify it’s sane

Joseph: I got the size from my view. assumed it was valid and mentally moved on.

MarkD: “everything you know is wrong” 🙂

So, how did I know what was the right question to ask? I sometimes

think of code streams in terms of articulation points—a place where interesting

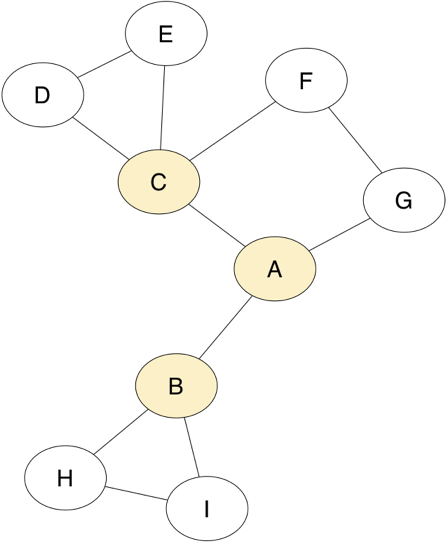

things happen. Articulation point is a concept from graph theory. It

is a node in a graph, that when removed, would split the graph into

two or more smaller graphs. Given this graph:

The nodes A, B, and C are articulation points. Remove any one of those and the graph is split into two parts. Removing any of the other nodes will leave a connected graph.
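If you want to see the graph theory in code, here’s a minimal sketch of the textbook approach. It runs a depth-first search and tracks “low-link” values. It’s written in plain C, which also compiles as Objective-C. The Graph type, the adjacency-matrix representation, and the function names are all hypothetical, made up for illustration. A node is flagged when some part of the graph below it in the search can’t reach back above it without going through it.

```c
#include <assert.h>
#include <stdbool.h>
#include <string.h>

#define MAX_NODES 16

/* Hypothetical undirected graph stored as an adjacency matrix. */
typedef struct {
    int count;
    bool edge[MAX_NODES][MAX_NODES];
} Graph;

static void add_edge(Graph *g, int a, int b) {
    g->edge[a][b] = true;
    g->edge[b][a] = true;
}

/* disc[v] is the order in which v was discovered (0 = not yet visited).
   low[v] is the earliest discovery time reachable from v's DFS subtree
   using at most one back edge. */
static void dfs(const Graph *g, int v, int parent, int *timer,
                int disc[], int low[], bool is_cut[]) {
    disc[v] = low[v] = ++(*timer);
    int children = 0;
    for (int w = 0; w < g->count; w++) {
        if (!g->edge[v][w]) continue;
        if (disc[w] == 0) {                 /* tree edge: explore w */
            children++;
            dfs(g, w, v, timer, disc, low, is_cut);
            if (low[w] < low[v]) low[v] = low[w];
            /* A non-root v is a cut vertex if w's subtree can't
               reach above v without passing through v. */
            if (parent != -1 && low[w] >= disc[v]) is_cut[v] = true;
        } else if (w != parent) {           /* back edge */
            if (disc[w] < low[v]) low[v] = disc[w];
        }
    }
    /* The DFS root is a cut vertex only if it has 2+ children. */
    if (parent == -1 && children > 1) is_cut[v] = true;
}

/* Sets is_cut[v] to true for every articulation point v. */
void find_articulation_points(const Graph *g, bool is_cut[]) {
    assert(g->count <= MAX_NODES);
    int disc[MAX_NODES] = {0};
    int low[MAX_NODES] = {0};
    int timer = 0;
    memset(is_cut, 0, sizeof(bool) * (size_t)g->count);
    for (int v = 0; v < g->count; v++)
        if (disc[v] == 0) dfs(g, v, -1, &timer, disc, low, is_cut);
}
```

The figure’s exact edges aren’t reproduced here, so here is a stand-in graph with seven nodes:

- two triangles (0-1-2 and 3-4-5),
- an edge from 2 to 3 joining them,
- a node 6 hanging off node 5.

On that graph the sketch flags nodes 2, 3, and 5 as articulation points, playing the roles of A, B, and C. Removing any other node leaves the graph connected.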

When I’m looking at code (or imagining code in my head), I try to focus my attention on the articulation points. For software, articulation points are places that change the behavior of the system under study. They might be control-flow decisions or changes in some object’s state. In the UIGraphics case, the only articulation point was the size being passed in.

How did I get to that conclusion?

When Joseph asked his question, I was reminded of code I’d written before that draws into image contexts:

```objc
UIGraphicsBeginImageContextWithOptions(pageRect.size, YES, 0.0);
CGContextRef context = UIGraphicsGetCurrentContext();
CGContextScaleCTM(context, percent, percent);
CGContextTranslateCTM(context, -pageRect.origin.x, -pageRect.origin.y);
// renderInContext: is a CALayer method, so render the view's layer.
[self.view.layer renderInContext:context];
UIImage *viewImage = UIGraphicsGetImageFromCurrentImageContext();
UIGraphicsEndImageContext();
```

The first two lines of code are the only interesting ones here. They set up some state, and once the second line returns nil, there’s nothing useful the rest of the code could do anyway. So I mentally focused on them:

```objc
UIGraphicsBeginImageContextWithOptions(pageRect.size, YES, 0.0);
CGContextRef context = UIGraphicsGetCurrentContext(); // this is returning nil
```

UIGraphicsGetCurrentContext takes no arguments and just returns a Core Graphics context. It only reacts to whatever ambient state has been set up prior to being called. It is fundamentally uninteresting.

Therefore the only interesting code is:

```objc
UIGraphicsBeginImageContextWithOptions(pageRect.size, YES, 0.0);
```

The last two parameters are “should the context be opaque?” and “what scale factor should be used?” Passing 0.0 for the scale parameter is a magic value meaning “use the scale factor of the device’s main screen.”

In fact, there’s a convenience version that takes just the size. It behaves like passing NO for opaque and 1.0 for the scale:

```objc
UIGraphicsBeginImageContext(pageRect.size);
```

So the only thing that could affect the subsequent calls is the size parameter.

I look for articulation points because they’re usually nice places to split your problem space into multiple pieces. Run an experiment that checks the size being passed to UIGraphicsBeginImageContextWithOptions.

- If the size is bad, the next step is to track down exactly why it’s bad.
- If the size is good, you’re going to have a bad day trying to figure out what’s going wrong inside UIKit’s graphics machinery.

Luckily, problems are pretty much always my fault (I have a Hierarchy of Blame). So I assume I’ll be looking at where the size came from rather than debugging something deep in UIKit.
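Here’s a sketch of that experiment, written in plain C so it stands on its own. The Size struct is a hypothetical stand-in for CGSize. The size_is_drawable and check_context_size helpers are made up for illustration; they are not UIKit APIs. A size counts as sane only if both dimensions are finite and greater than zero, which is exactly the test the 0,0 size in Joseph’s case fails.

```c
#include <math.h>
#include <stdbool.h>
#include <stdio.h>

/* Hypothetical stand-in for CGSize so the check can run without UIKit. */
typedef struct {
    double width;
    double height;
} Size;

/* True when both dimensions are finite and strictly positive.
   NaN fails the "> 0.0" comparison; infinity fails isfinite(). */
static bool size_is_drawable(Size s) {
    return isfinite(s.width) && isfinite(s.height)
        && s.width > 0.0 && s.height > 0.0;
}

/* The experiment at the articulation point: log the size and report
   whether it's sane, which tells you which half of the problem to chase. */
static bool check_context_size(Size s) {
    bool ok = size_is_drawable(s);
    fprintf(stderr, "context size {%g, %g}: %s\n",
            s.width, s.height, ok ? "ok" : "BAD");
    return ok;
}
```

In the real Objective-C code, the same experiment can be as small as logging NSStringFromCGSize(pageRect.size), or adding an NSAssert, right before the call to UIGraphicsBeginImageContextWithOptions.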

What I consider an articulation point changes depending on the behavior of the bug and the system. Returning to my originally imagined chunk of code:

```objc
UIGraphicsBeginImageContextWithOptions(pageRect.size, YES, 0.0);
CGContextRef context = UIGraphicsGetCurrentContext();
CGContextScaleCTM(context, percent, percent);
CGContextTranslateCTM(context, -pageRect.origin.x, -pageRect.origin.y);
// renderInContext: is a CALayer method, so render the view's layer.
[self.view.layer renderInContext:context];
UIImage *viewImage = UIGraphicsGetImageFromCurrentImageContext();
UIGraphicsEndImageContext();
```

What if my bug was that the contents of the image were drawn in the wrong place? Creating the image context is no longer interesting to me. It works. The articulation points that control the drawing are the two transformation matrix calls, so that’s where I’d start asking questions. Questions like “do I have the order of matrix operations correct?” and “are the scale and translation values reasonable?”
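One way to answer the matrix-order question is to model it. The sketch below is a simplified model, assumed for illustration: a CTM restricted to axis-aligned scale and translate, written in plain C with hypothetical Ctm and Point types rather than the real Core Graphics API. It mimics how Core Graphics composes CTM calls. Each new scale or translate is applied to user-space points before the transform already in place, so the call made last is the first to act on your coordinates.

```c
/* Hypothetical minimal CTM model (scale + translate only):
   a user-space point p maps to (sx * p.x + tx, sy * p.y + ty). */
typedef struct { double sx, sy, tx, ty; } Ctm;
typedef struct { double x, y; } Point;

static Ctm ctm_identity(void) { return (Ctm){1.0, 1.0, 0.0, 0.0}; }

/* Modeled on CGContextScaleCTM: the new scale acts on points
   before the existing transform, so only the scale terms change. */
static void ctm_scale(Ctm *m, double sx, double sy) {
    m->sx *= sx;
    m->sy *= sy;
}

/* Modeled on CGContextTranslateCTM: the offset acts on points before the
   existing transform, so it is multiplied by the scale already in place. */
static void ctm_translate(Ctm *m, double dx, double dy) {
    m->tx += m->sx * dx;
    m->ty += m->sy * dy;
}

/* Map a user-space point through the modeled CTM. */
static Point ctm_apply(Ctm m, Point p) {
    return (Point){m.sx * p.x + m.tx, m.sy * p.y + m.ty};
}
```

The code above scales by percent, then translates by the negated origin. In that order, a point at pageRect’s origin lands at (0, 0) in the image. Swap the two calls, with percent = 0.5 and an origin of (100, 200), and the same point lands at (-50, -100). That would show up as contents drawn in the wrong place.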

To answer Joseph’s question from earlier, I knew to ask that question because, after boiling everything down, it was the only question I could ask. It’s kind of nice not really having any choices.

Does that mean Joseph is a dummy for not realizing it? Of course not. I happened to have the luxury of coming in on the bug at the latest possible step, after a lot of legwork had been done. He had already waded through tons of other code and possibilities, and had gotten sidetracked by unrelated details, as we all do.
