Swift Regex Deep Dive

iOS MacOur introductory guide to Swift Regex. Learn regular expressions in Swift including RegexBuilder examples and strongly-typed captures.

I’ve talked about stochastic profiling in the past, such as the fairly recent Rock Heads. It’s something I mention when I talk about debugging or performance tuning at various conferences. Interestingly enough, I had a need for it last night because I had stumbled into an often-reported, difficult-to-reproduce problem and wasn’t in a situation to hook up Instruments.

What is stochastic profiling? Interrupting your program in the debugger, and noticing what’s on the stack. Resume your program, and interrupt it again. After a couple of rounds of this, you might have a clue as to what your performance problem is. You could do a couple of rounds of stochastic profiling in the time it takes for Instruments to crank up.

(If you’re not interested in the ramblings of an old man about his personal enlightenment with stochastic profiling, skip on down to the “Roominating” section.)

Most Mac and iOS programmers have never had the thrill and privilege of programming for Mac OS 9 and earlier. I actually started programming Macs when the original 128Ks came out. A machine with 128K of memory. That’s K. Not M. Not G. Some icons won’t fit into 128K. This 128K was shared amongst several applications, the “OS” (as little as there actually was), and the frame buffer. There was 64K of ROM packed with library code. But overall an extremely memory-tight system for what it did. You can read some of the technical history over at Andy Hertzfeld’s Folklore.org.

One of the things the original Mac lacked was memory protection. A stray pointer error could, and most likely would, trash the OS’s memory and either lock up the machine or force a reboot. Sometimes it would make the computer speaker shriek like a banshee. That’s a wonderful sound to hear at 3am. Imagine every pointer error you make requires rolling a d20 saving throw, and if you miss it, you have to reboot.

One of the tools in our development arsenal were system debuggers. These hooked into the OS interrupt vectors. Sometimes these debuggers would still be working even if the system went down. But usually they were arcane, and user-hostile. One such debugger was The Debugger by Steve Jasik. The Debugger was rather odd, but had the most Mac-like UI of any of the system debuggers at the time. It became my debugger of choice. Type a secret keystroke and instantly you were in the debugger where you could poke around your app or the entire system.

The premier development tool at the time was Metrowerks CodeWarrior (Be warned, nostalgia attack on the parent page of that screenshot). It had a great profiler, similar to the Instruments Time Profiler. It just had two limitations – it couldn’t profile code in shared libraries. It couldn’t profile code in a threaded application. Just the concept of threads were a deep dark mystery in those days.

At the time, 1996 or so, I was working at AOL in their Information Provider Tools group creating tools for big publishers to create and edit their areas on AOL for the members to spend time in. We were working on a site visualization tool – a nice fancy tree view. It was beautiful. My co-workers Scott and John had done a masterful job displaying the contents of the publisher’s corner of AOL.

First problem. Scrolling was terrible. It wasn’t slow, it was majestic. We couldn’t ship it.

Second problem. Our tool was a plug-in. A plug-in for a threaded program. So much for profiling it with the nice profiler.

Being a good engineer, Scott immediately took the blame, assuming it was his layout algorithm. “It must be exponential time or something” and buried himself in his office for a couple of days, re-engineering and optimizing the layout algorithm. It did get faster, but by percentage points and not the orders of magnitude we needed.

I had a little down time, and was bored. I ran the program, visited a big publisher’s area, and started scrolling. Curious as to what was actually happening, I hit The Debugger’s key combo. I saw Scott’s layout algorithm on the stack, near the middle, and some string stuff at the top. Resumed the app. Hit the debugger again. Very similar stack – string stuff at the top. Again and again and again.

I had just (re-)invented stochastic profiling. Interrupt an application in the debugger and look at the stack traces. It’s definitely not rocket surgery. The hardest part is remembering that you can do it. Back to the bug – every time I randomly interrupted the app, the same string stuff as at the top of the stack:

TString timestamp =

month + “/” + day + “/” + year + “ “ + hours

+ “:” + minutes + “:” + seconds;

It was a big C++ program. TString is our string class, which did all sorts of fancy stuff like dynamically-sized strings (no more 255 character limit!), convenient concatenation with the plus sign, and automatic conversion from static C literals and integers to TStrings.

Remember that machines of that era, while roomier than the original 128K and 512K macs, were still pretty puny compared to the supercomputers we’re carrying around in our pockets to play Angry Birds on. The less work you can do, the better. This particular string construction was doing quite a bit of work:

Convert five literal strings to their dynamic variety. This involved at least one dynamic allocation under the hood for each object.

Convert six integers into dynamic strings, with dynamic allocation

For each pairing of TString::operator+, a temporary TString object is created (dynamic allocation yet again), used, and destroyed implicitly. There’s something like 15 or 20 object constructions and destructions going on. For each timestamp. There could be hundreds of timestamps on the screen. These timestamps got regenned when the screen scrolled.

After conferring with the team, we decided to go old-school C and use a strftime rather than caching the string label. We obviously weren’t doing proper localization anyway, so using strftime was fine. Suddenly the scrolling tree view was responsive. Given Scott’s improvements to the algorithm, scrolling was downright liquid.

During classes and talks, this story is my object lesson why we must measure before doing any performance tuning. We as developers are a pretty smart breed. We have to be to keep all this jazz in our brains while creating a working program. Strange performance problems (and strange bugs too for that matter) mean we don’t have a correct, or even complete mental model of the system to base our fixes on. Otherwise we would have fixed the problem already!

If your usual measuring tools aren’t working, cook up some new ones. Stochastic profiling in the debugger. Sprinkle BNRTimeBlocks around. Add some caveman debugging with timestamps.

So is such a profiling technique useful in today’s world of high-powered Instruments™ and crash-proof operating system? Amazingly enough, yes. I was adding some features for an upcoming release of Roominant, the Ranch’s meeting room reservation system. Part of this feature involved doing some work when the application has been idle for more than five minutes or so. After some burn-in testing, and right before running out the door for an orchestra rehearsal, I scrolled the main view of the program, and scrolling was really choppy.

OMG! We’ve had reports of this bug, but so far it had eluded the development team. We didn’t have any idea what the problem was. Too many network requests in-flight? Too many meetings on the main scroll view? The user belongs to too many organizations? Strange interactions with EventKit? Ideally I’d just re-run the app in Instruments with the Time Profiler to see what was consuming CPU time, but I hadn’t been able to reproduce the problem before. But now the app was misbehaving perfectly. Sometimes you can attach Instruments to a running app on a device, but that usually fails for me if I have the debugger attached to the application too. I didn’t want to risk losing my app’s state if the tools got into a snit.

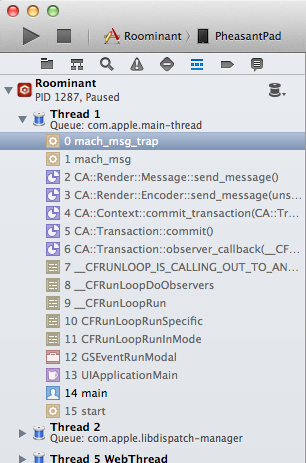

Because I was in the debugger, I just scrolled Roominant with one hand on an iPad and clicked the pause button in the Xcode debugger console with the other. Glance at the stack trace, and then click continue. After about four or five of these cycles, a common stack trace was appearing:

Only two stack traces ever showed up for me during this profiling run: CFRunloop waiting for events, and this stack with CA::Render calls. CA for Core Animation, which implies that I’m abandoning layers. Tons of these layers are getting redrawn leading to the terrible scrolling. Most likely the views that the layers back are also getting abandoned. That’s absolutely huge news. I can ignore everything else in the app except for layer and view manipulations

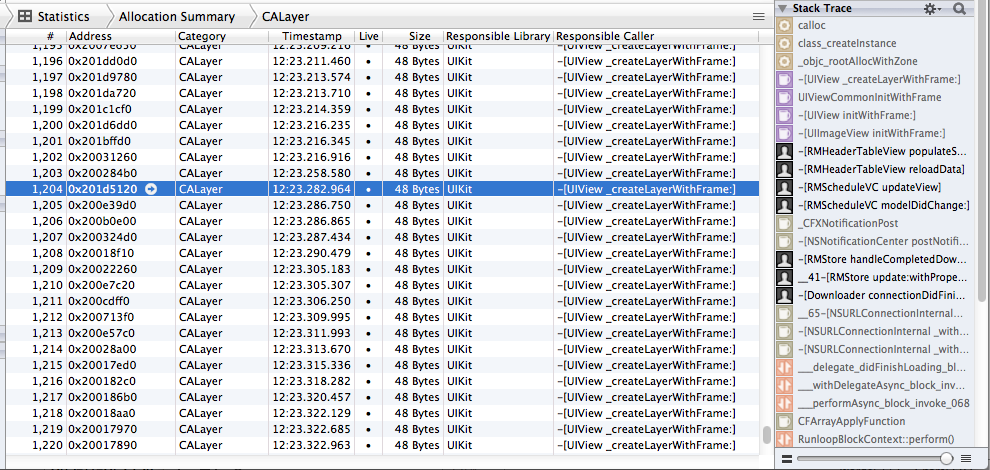

After orchestra, I couldn’t sleep because the solution was so close. I ran Instruments with the Leaks template, which includes the Allocations instrument as well. I didn’t even bother with the Time Profiler instrument because I had narrowed the problem down already to layers and/or views. I exercised the app for a while, doing things that should maximize view operations, and got a beautiful smoking gun:

Houston, we have a problem. I quickly got stack traces and found the cause – we were making image views for the grid lines on the main meeting room schedule page and adding them to the superview upon a refresh. The view removal code just never got hooked up, causing these image views to stack up. The problem never manifested itself really until thousands of views accumulated, which didn’t happen under typical developer application build-run-crash lifetimes.

The stochastic profiling took all of five minutes to do. This pointed me to the tools to use in Instruments to find the problem. Another five minutes to find the stack trace. Fixing the problem took a little longer, just to make sure we weren’t making similar errors.

Stochastic profiling is definitely a blunt-instrument caveman technique. “Mongo stop app and look.” But it’s very fast, and sometimes can point you exactly in the direction you need to look with slower, more sophisticated tools.

Our introductory guide to Swift Regex. Learn regular expressions in Swift including RegexBuilder examples and strongly-typed captures.

The Combine framework in Swift is a powerful declarative API for the asynchronous processing of values over time. It takes full advantage of Swift...

SwiftUI has changed a great many things about how developers create applications for iOS, and not just in the way we lay out our...